Our Model Risk Management Technology

Build better models, increase the efficiency of model development and validation, and improve the planning of your MRM tasks.

A NEW APPROACH TO MANAGING YOUR MODELS

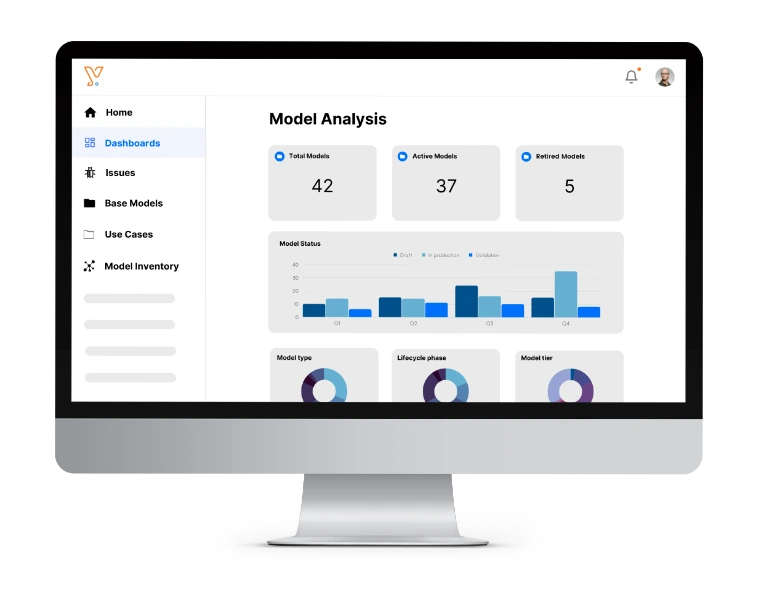

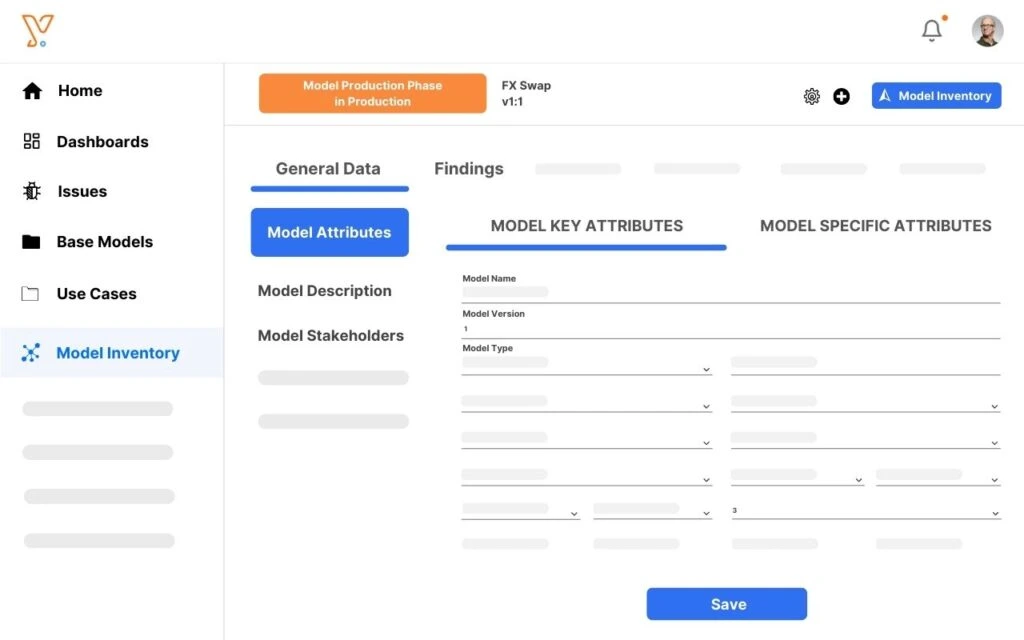

Support your model lifecycle with Chiron Enterprise

Chiron Enterprise combines an interactive model inventory with a configurable workflow engine, so that decision-makers, model developers, and model validators can collaborate efficiently, manage end-to-end business processes, and audit every event across the model lifecycle.

VALIDATE YOUR MODELS WITH EASE

Automate Model Validation with Chiron App

Chiron App streamlines model testing, validation, and documentation through automation. It features MRM-specific analytics such as benchmarking, backtesting, and data quality analysis. Chiron App also tracks versions and makes past results easier to reproduce.

Key features

A dedicated and comprehensive MRM solution to boost the efficiency of model development and validation teams.

Automate Model Validation

Test models at scale by executing standardised, reusable routines.

Enhance Collaboration

Encourage real-time collaboration between model developers and validators and prevent miscommunication.

Automate Model Documentation

Model documentation is often poorly written and therefore inadequate to support a rigorous validation effort. Automating documentation addresses this gap.

Manage End-to-End Processes

Scale model risk management processes and configure workflows without writing code.
